Sound waves mimic quantum secrets

Physicists build a tunable acoustic system to study quantum ideas, using sound to mimic atoms without fragile measurements.

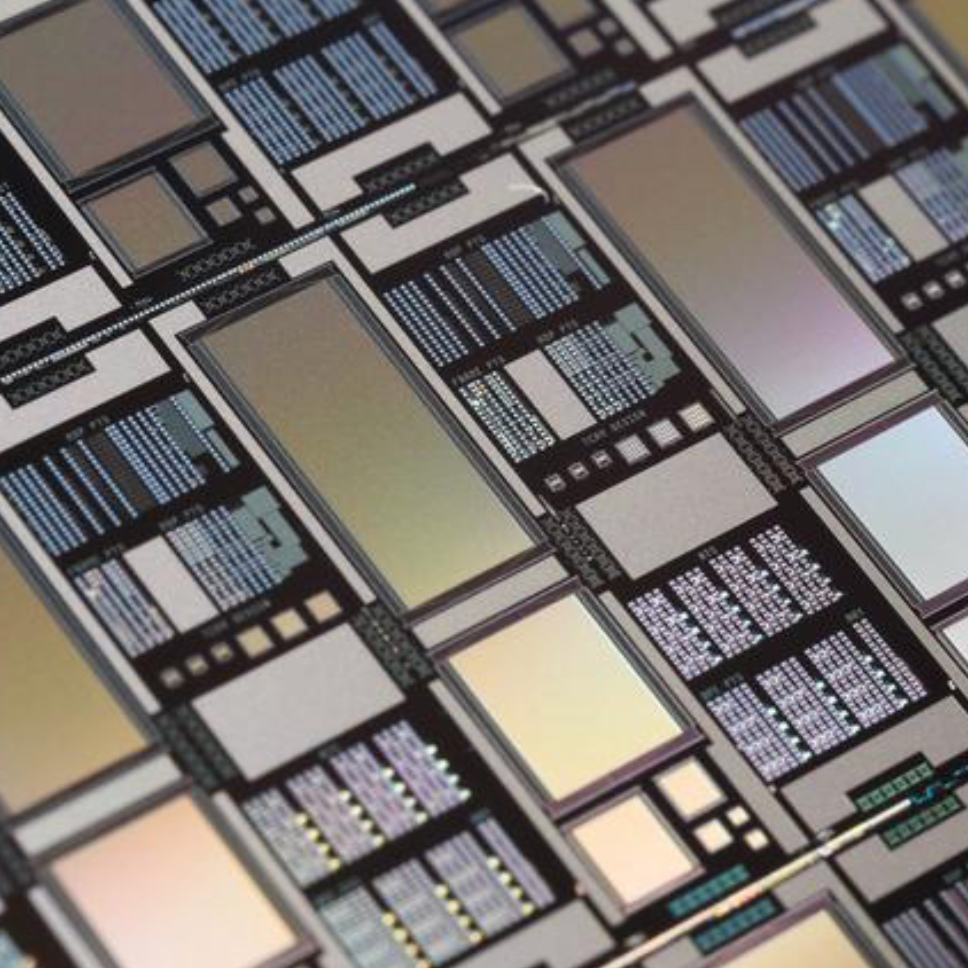

Tiny crystals, big storage: a breakthrough in computer memory

Researchers use atom-sized defects in crystals to pack terabytes of data into a millimeter-sized cube, for use in regular computers.

Easy-to-use code generator for AI models could save bandwidth, memory, and computation

MIT researchers have devised a system that improves AI models by exploiting data redundancy, and have developed a code generator.

A neuromorphic computing roadmap

Researchers have mapped out a path for the development of neuromorphic computers that work like the brain.

The skin's electrical properties can reveal what we're feeling

Researchers have found that differences in human emotions are evident in skin conductance response waveforms.

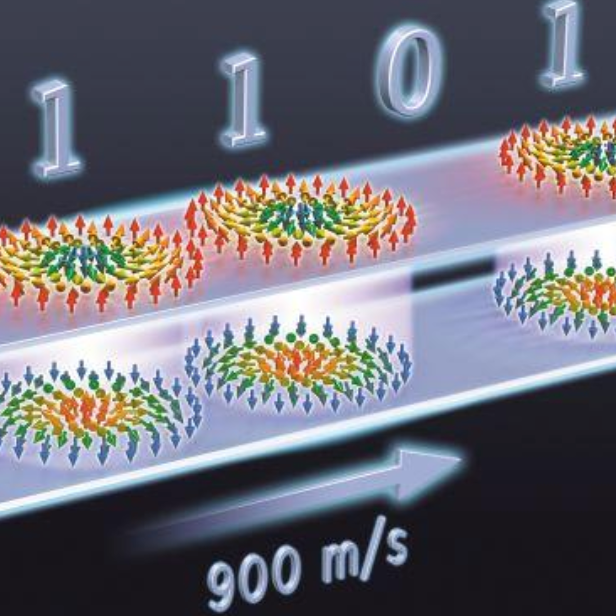

'Skyrmions' move at record speeds: a step toward future computing

Nanoscale memory bits may offer high storage capacity and low energy consumption

Analog computing solves complex equations using far less energy

Memristors can run AI tasks at 1/800th the power: studies reported in IEEE Spectrum

Radical new light-wave chip design enables AI computing at speed of light

Uses high-speed light waves instead of electricity, could be adapted for use in GPUs

Twisted magnets make machine learning more adaptable, reduce energy use

Training one large AI model can generate hundreds of tons of carbon dioxide, say the researchers
