AI ‘semantic decoder’ method can reveal hidden stories in patients’ minds, researchers say

May 10, 2023


New hope for patients unable to speak

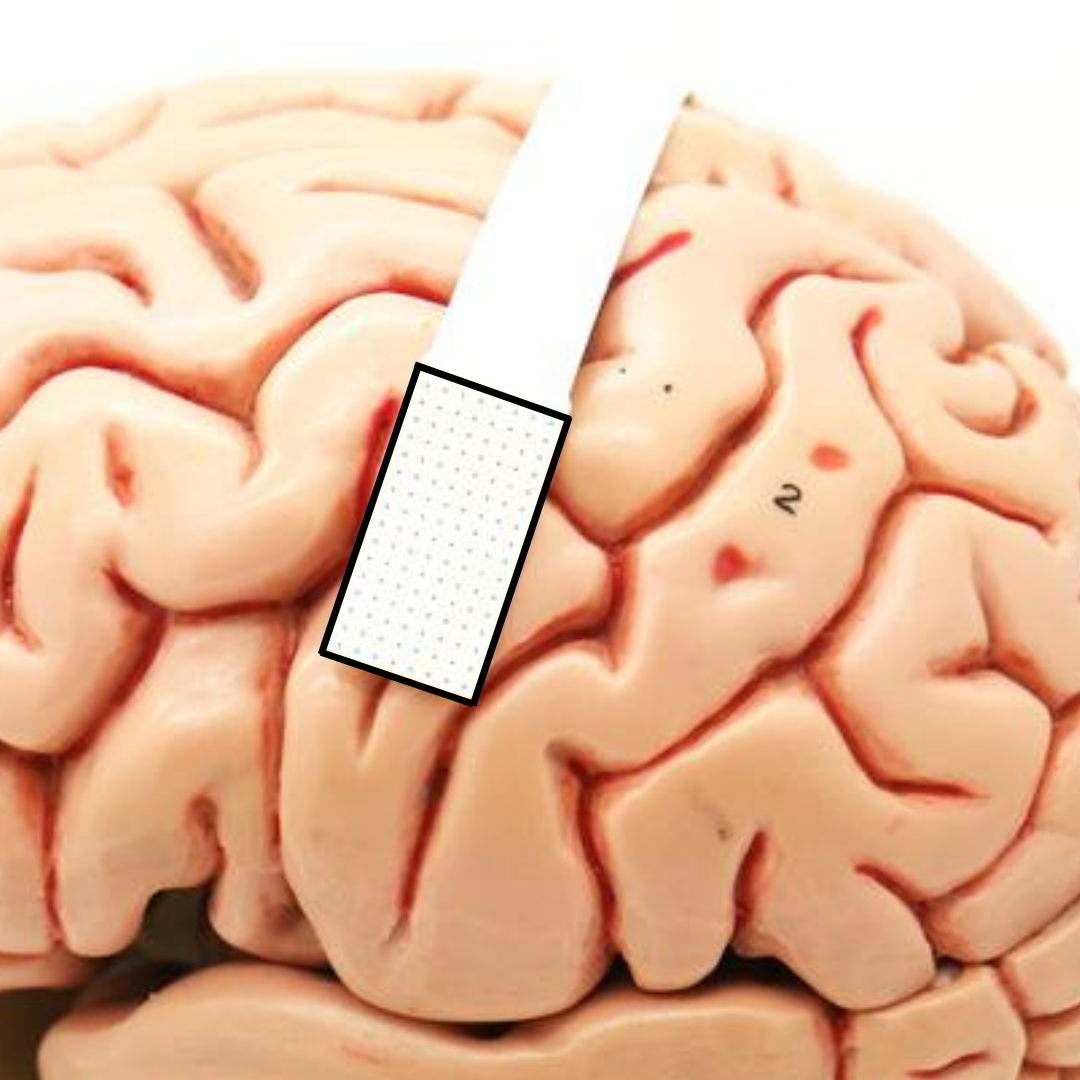

Neuroscientists have developed a new “semantic decoder” AI transformer method to help patients who have lost the ability to speak. The non-surgical system can translate a person’s brain activity — while listening to a story or silently imagining telling a story — into a continuous stream of text.

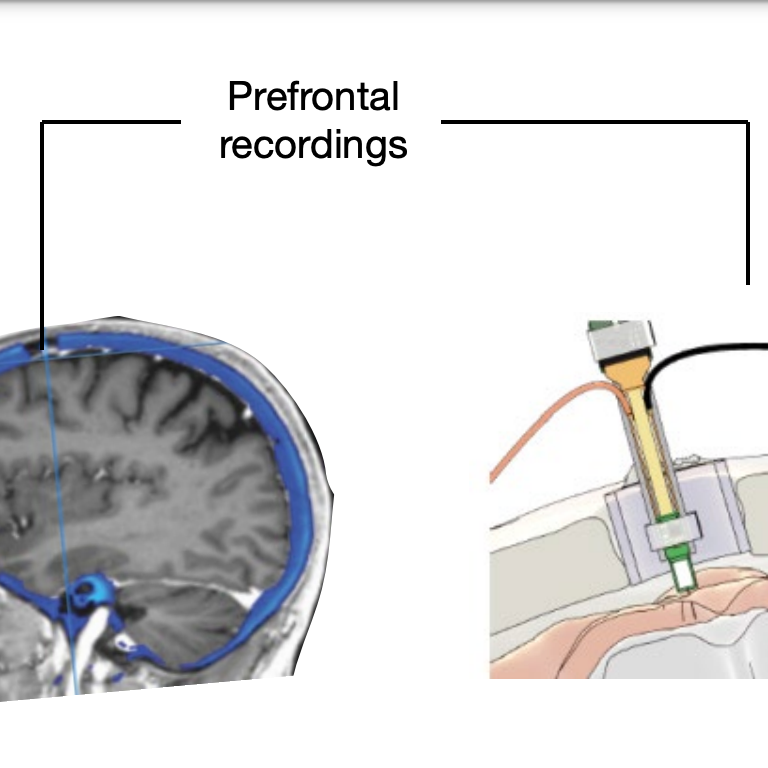

Current methods require implants and brain surgery, or else are limited to a few words, say the researchers at the University of Texas at Austin.

Semantic reconstruction

The new method instead decodes the meaning of language, a process the researchers call “semantic reconstruction,” from recordings made with functional magnetic resonance imaging (fMRI) while the subject lies in an MRI machine. The fMRI responses associated with specific words (blood-oxygen-level-dependent signals, known as BOLD, which reflect blood flow and oxygenation) were recorded while the subject listened to 16 hours of narrative stories. An encoding AI model was then estimated for each subject to predict brain responses from semantic features of the stimulus words.
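To make the idea of an encoding model concrete, here is a minimal sketch in Python of fitting a model that predicts brain responses from word features. It is an illustration under stated assumptions, not the researchers' implementation: it assumes a ridge-regularized linear model, uses small synthetic arrays in place of real semantic features and BOLD recordings, and the names `fit_encoding_model`, `predict_responses`, and `alpha` are hypothetical.

```python
import numpy as np


def fit_encoding_model(features, responses, alpha=1.0):
    """Fit ridge regression weights mapping stimulus features to responses.

    features:  (n_timepoints, n_features) semantic features of the stimulus
    responses: (n_timepoints, n_voxels) recorded brain responses
    alpha:     ridge penalty strength (an assumed value, not from the study)
    """
    n_features = features.shape[1]
    # Closed-form ridge solution: (X^T X + alpha * I)^-1 X^T Y
    gram = features.T @ features + alpha * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ responses)


def predict_responses(features, weights):
    """Predict brain responses for a new sequence of stimulus features."""
    return features @ weights


# Synthetic stand-in data (assumption): 200 time points, 8 features, 5 voxels.
rng = np.random.default_rng(0)
n_timepoints, n_features, n_voxels = 200, 8, 5
true_weights = rng.normal(size=(n_features, n_voxels))
features = rng.normal(size=(n_timepoints, n_features))
noise = 0.1 * rng.normal(size=(n_timepoints, n_voxels))
responses = features @ true_weights + noise

# One model is fitted per subject; here there is a single synthetic "subject".
weights = fit_encoding_model(features, responses, alpha=1.0)
predicted = predict_responses(features, weights)
```

In a sketch like this, a model fitted on one person's recordings only describes that person's responses, which is consistent with the article's point that a model was estimated separately for each subject.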

The system is not currently practical for use outside of the laboratory because of the time needed on an fMRI machine. But the researchers are looking at using portable brain-imaging systems, such as functional near-infrared spectroscopy (fNIRS).

“We take very seriously the concerns that it could be used for bad purposes and have worked to avoid that,” study leader Jerry Tang, a doctoral student in computer science, said in a statement. “We want to make sure people only use these types of technologies when they want to and that it helps them.”

Citation: Tang, J., LeBel, A., Jain, S., & Huth, A. G. (2023). Semantic reconstruction of continuous language from non-invasive brain recordings. Nature Neuroscience, 26(5), 858-866. https://doi.org/10.1038/s41593-023-01304-9

Also see: J. Tang, Societal implications of brain decoding, Medium.
