Neuroscientists decode Pink Floyd song from brain recordings

Aug. 17, 2023.

For people who have trouble communicating, recordings from electrodes on the brain surface could help reproduce the musicality of speech

May allow for adding musical elements to brain–computer interface (BCI) applications

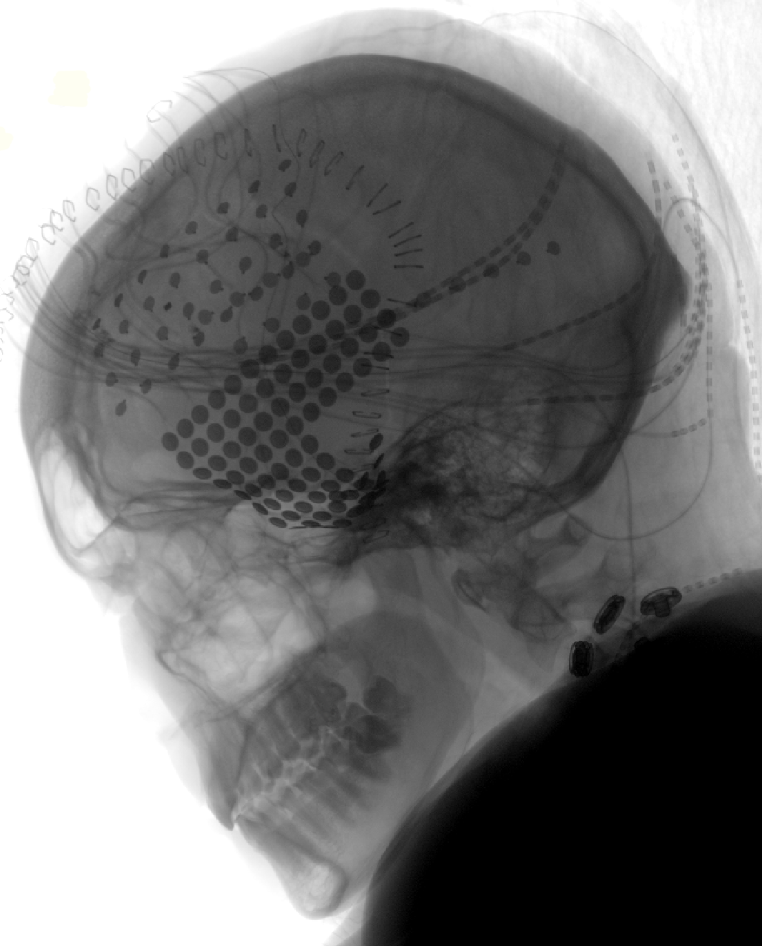

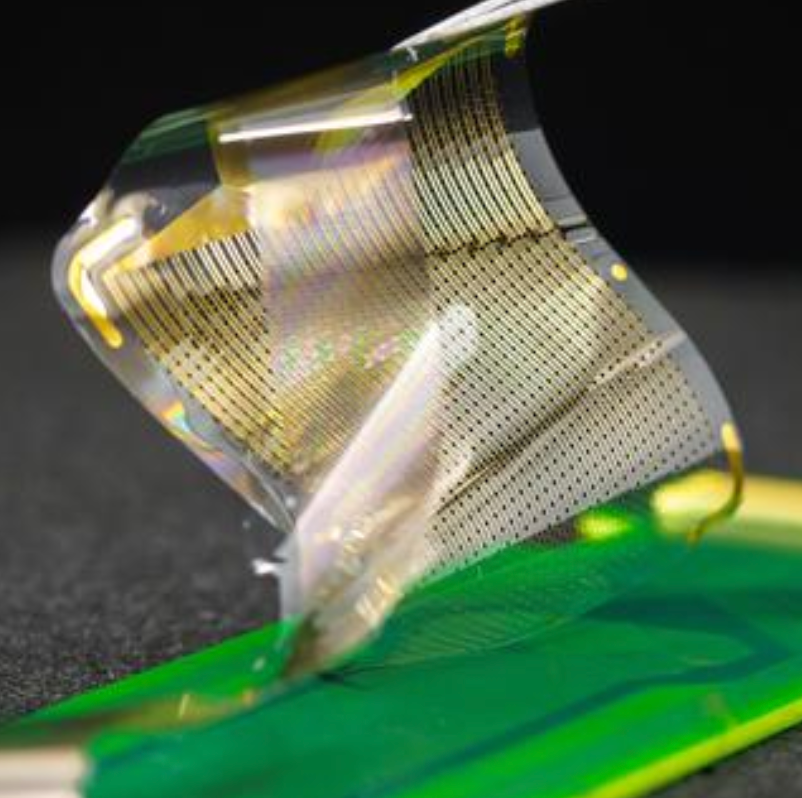

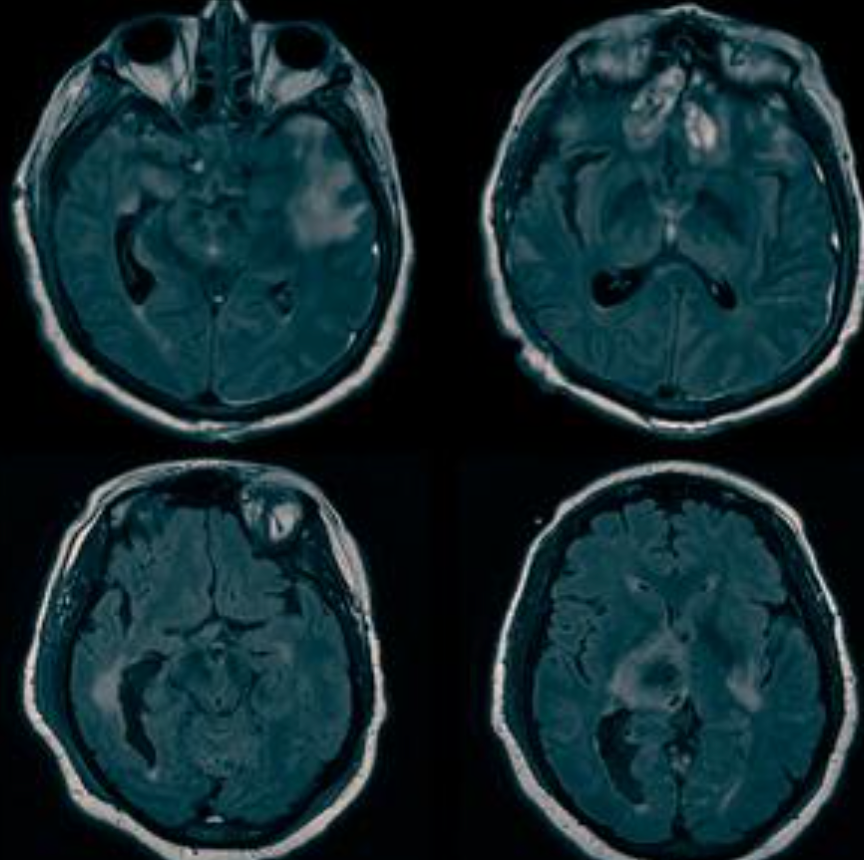

Neuroscientists at the University of California, Berkeley recorded intracranial EEG (electrical) activity from two areas of the brain as patients listened to the Pink Floyd song “Another Brick in the Wall, Pt. 1.”

They captured electrical activity from specific regions on the surface of the brain related to attributes of the music (tone, rhythm, harmony and words) to see if they could reconstruct what the patients were hearing.

Using AI software, they were able to reconstruct the song from the brain recordings of 29 patients more than a decade later. It is the first known time a song has been reconstructed from intracranial EEG recordings.

The phrase “All in all it was just a brick in the wall” comes through recognizably, with rhythms intact and the words muddy but decipherable.

“Our findings show the feasibility of applying predictive modeling on short datasets acquired in single patients, paving the way for adding musical elements to brain–computer interface (BCI) applications,” the researchers note.

The musicality of speech

“For people who have trouble communicating, whether because of stroke or paralysis, such recordings from electrodes on the brain surface could help reproduce the musicality of speech that’s missing from today’s robot-like reconstructions,” the researchers suggest.

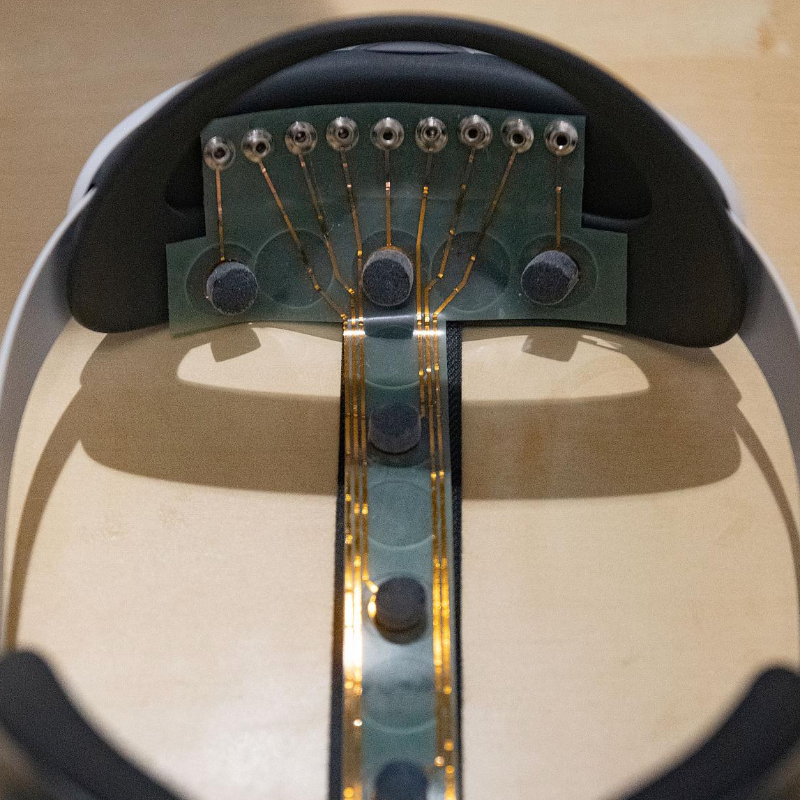

As brain recording techniques improve, it may be possible someday to make such recordings without opening the brain, perhaps using sensitive electrodes attached to the scalp. (See “How To Measure Your Brain Activity Using A EEG Sensor Mounted On A VR Headset.”)

“Currently, scalp EEG can measure brain activity to detect an individual letter from a stream of letters, but the approach takes at least 20 seconds to identify a single letter, making communication effortful and difficult,” said Robert Knight, a neurologist and UC Berkeley professor of psychology in the Helen Wills Neuroscience Institute.

Citation: Bellier L, Llorens A, Marciano D, Gunduz A, Schalk G, Brunner P, et al. (2023) Music can be reconstructed from human auditory cortex activity using nonlinear decoding models. PLoS Biol 21(8): e3002176. https://doi.org/10.1371/journal.pbio.3002176 (open-access)
