Let us know your thoughts! Sign up for a Mindplex account now, join our Telegram, or follow us on Twitter.

Boolformer: The First Transformer Architecture Trained to Perform End-to-End Symbolic Regression of Boolean Functions

One of the many domains where deep neural networks, particularly Transformers, are anticipated to achieve their next breakthrough is scientific exploration. This expectation rests on their notable success in areas such as computer vision and language modeling. However, these networks still seem limited in their ability to perform logic tasks. Such tasks, which include traditional vision or language tasks whose input has a combinatorial nature, make representative data sampling challenging. This has motivated the machine learning community to focus heavily on reasoning tasks, including explicit tasks in the logical domain (such as arithmetic and algebra, algorithmic CLRS, or LEGO) and implicit reasoning in other modalities (such as Pointer Value Retrieval or CLEVR for vision models, and LogiQA and GSM8K for language models).

Since these tasks remain difficult for standard Transformer architectures, it is natural to investigate whether they can be handled more efficiently with alternative methods, such as making better use of the Boolean nature of the task. In particular, standard Transformer training can generalize in undesirable ways, and the resulting models are hard to interpret. This raises the question of how to improve the generalization and interpretability of these models. Research by a team from Apple and EPFL offers one answer: the Boolformer, which the team presents as the first Transformer architecture trained to perform end-to-end symbolic regression of Boolean functions. The Boolformer can predict compact formulas for complex functions that were not seen during training, generalizing consistently to functions and data more complex than those it was trained on.

The Boolformer predicts Boolean formulas, which can be seen as symbolic expressions built from the three basic logic gates: AND, OR and NOT. The model is trained on synthetically generated functions: the truth table of each function serves as the input, and its formula serves as the target. This setup, with full control over the data generation process, helps achieve both generalization and interpretability. The researchers from Apple and EPFL demonstrate the strong performance of this approach in both theoretical and real-world settings, and lay the foundation for future advances in this area.
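To make the input/target pairing concrete, here is a minimal sketch of how one synthetic training example could be produced: sample a random formula over AND, OR and NOT, then enumerate its truth table. The tuple-based formula representation, the function names, and the sampling probabilities are all assumptions made for illustration; this is not the authors' generator, which also simplifies the sampled formulas.

```python
import itertools
import random

def random_formula(variables, depth, rng):
    """Sample a random formula tree over AND, OR and NOT (illustrative only)."""
    # Stop at a leaf when the depth budget runs out, or at random (0.3 is arbitrary).
    if depth == 0 or rng.random() < 0.3:
        return rng.choice(variables)
    op = rng.choice(["and", "or", "not"])
    if op == "not":
        return ("not", random_formula(variables, depth - 1, rng))
    return (op,
            random_formula(variables, depth - 1, rng),
            random_formula(variables, depth - 1, rng))

def evaluate(formula, assignment):
    """Evaluate a formula tree under a dict mapping variable names to booleans."""
    if isinstance(formula, str):
        return assignment[formula]
    op = formula[0]
    if op == "not":
        return not evaluate(formula[1], assignment)
    left = evaluate(formula[1], assignment)
    right = evaluate(formula[2], assignment)
    return (left and right) if op == "and" else (left or right)

def truth_table(formula, variables):
    """Return a list of (input_bits, output) rows covering every assignment."""
    rows = []
    for bits in itertools.product([False, True], repeat=len(variables)):
        rows.append((bits, evaluate(formula, dict(zip(variables, bits)))))
    return rows

def make_example(n_vars=3, depth=3, seed=0):
    """One (truth table, formula) pair: the table is the input, the formula the target."""
    rng = random.Random(seed)
    variables = [f"x{i}" for i in range(n_vars)]
    formula = random_formula(variables, depth, rng)
    return truth_table(formula, variables), formula

if __name__ == "__main__":
    table, formula = make_example()
    print("target formula:", formula)
    for bits, output in table:
        print(bits, "->", output)
```

Because the data is generated rather than collected, the distribution of formula sizes and variable counts is fully under the experimenter's control, which is the property the paragraph above credits for generalization and interpretability.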

The team makes several contributions. By training Transformers on synthetic datasets to perform end-to-end symbolic regression of Boolean functions, the researchers show that the Boolformer can predict a compact formula when given the full truth table of an unseen function. They also demonstrate that it can handle noisy and incomplete data, by feeding it truth tables with flipped bits and irrelevant variables. In addition, they evaluate the Boolformer on various real-world binary classification tasks from the PMLB database and show that it is competitive with classic machine learning approaches such as random forests while providing interpretable predictions. Finally, they apply the model to the well-studied task of modeling gene regulatory networks (GRNs) in biology, where they show that the Boolformer is competitive with current state-of-the-art approaches, with inference several times faster than the other methods.
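The noisy and incomplete setting can be pictured with a small sketch that corrupts a clean truth table in the three ways mentioned: flipping output bits, appending irrelevant input variables, and dropping rows. The function name, parameter names, and default rates below are hypothetical choices for illustration, not values taken from the paper.

```python
import random

def corrupt_truth_table(rows, flip_prob=0.1, n_irrelevant=2, keep_fraction=1.0, seed=0):
    """Return a noisy copy of rows, where each row is (input_bits, output).

    flip_prob:     chance that a row's output bit is flipped
    n_irrelevant:  number of random distractor inputs appended to every row
    keep_fraction: approximate share of rows kept (values below 1 simulate missing data)
    """
    rng = random.Random(seed)
    noisy = []
    for bits, output in rows:
        if rng.random() > keep_fraction:
            continue  # drop this row to simulate an incomplete table
        distractors = tuple(rng.random() < 0.5 for _ in range(n_irrelevant))
        if rng.random() < flip_prob:
            output = not output  # label noise
        noisy.append((tuple(bits) + distractors, output))
    return noisy

if __name__ == "__main__":
    # Clean truth table of x0 AND x1.
    clean = [((a, b), a and b) for a in (False, True) for b in (False, True)]
    for row in corrupt_truth_table(clean, flip_prob=0.25, n_irrelevant=1):
        print(row)
```

A model that recovers the original formula from such a table has to ignore the distractor columns and tolerate the flipped labels, which is what the robustness experiments are probing.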

Their code and models are open source and publicly available on their GitHub. The repository is set up so that anyone who wants to contribute can get started easily. Do check out their work.

However, some constraints remain, and they point to new areas for research. First, the quadratic cost of self-attention limits the model’s effectiveness on high-dimensional functions and large datasets, capping the number of input points at one thousand. Second, because the logical functions in the training set did not include the XOR gate explicitly, the model is limited in the compactness of the formulas it predicts and in its ability to express complex formulas such as parity functions. This limitation stems from the simplification step used during data generation, which required rewriting the XOR gate in terms of AND, OR and NOT. Adapting the generation procedure to produce simplified formulas containing XOR gates, as well as operators with higher parity, is left as future work by the research team. Third, the formulas predicted by the model are limited to single-output functions and gates with a fan-out of one (multi-output functions are predicted independently, component-wise).
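The XOR limitation is easier to see with a small worked example. The sketch below rewrites XOR using only AND, OR and NOT, chains it to build a parity formula, and counts gates in the resulting tree. The construction is a naive one chosen for illustration (it is not claimed to be the smallest possible formula, nor the paper's procedure), and all function names are assumptions.

```python
import itertools

def xor_formula(a, b):
    """a XOR b written with AND, OR, NOT only: (a OR b) AND NOT (a AND b)."""
    return ("and", ("or", a, b), ("not", ("and", a, b)))

def parity_formula(variables):
    """Chain XORs left to right; each sub-formula is copied, since fan-out is one."""
    formula = variables[0]
    for name in variables[1:]:
        formula = xor_formula(formula, name)
    return formula

def count_gates(formula):
    """Number of AND/OR/NOT nodes in the formula tree."""
    if isinstance(formula, str):
        return 0
    return 1 + sum(count_gates(child) for child in formula[1:])

def evaluate(formula, assignment):
    if isinstance(formula, str):
        return assignment[formula]
    if formula[0] == "not":
        return not evaluate(formula[1], assignment)
    left = evaluate(formula[1], assignment)
    right = evaluate(formula[2], assignment)
    return (left and right) if formula[0] == "and" else (left or right)

if __name__ == "__main__":
    for n in range(2, 6):
        names = [f"x{i}" for i in range(n)]
        print(n, "variables:", count_gates(parity_formula(names)), "gates")
    # prints 4, 12, 28 and 60 gates for 2, 3, 4 and 5 variables
```

With this particular construction the gate count roughly doubles with every added variable, because each XOR uses its left operand twice and a tree with fan-out one has to copy it. A vocabulary with a native XOR gate would need only one gate per added variable, which gives some intuition for why parity functions are awkward to express compactly in the AND/OR/NOT setting.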

In conclusion, the Boolformer is a new breakthrough in machine learning. It could make machine learning more accessible, efficient, and performant, and unlock the potential of AI in newer domains, helping the advancement of science and knowledge in the process.

Do not forget to check out the paper and their GitHub.


Breaking Ground in 3D Modeling: Unveiling 3D-GPT

Researchers from the Australian National University, University of Oxford, and Beijing Academy of Artificial Intelligence have collaboratively developed a groundbreaking framework known as 3D-GPT for instruction-driven 3D modeling.

The framework leverages large language models (LLMs) to dissect procedural 3D modeling tasks into manageable segments and appoints the appropriate agent for each task.

The paper begins by highlighting the increasing use of generative AI systems in various fields such as medicine, news, politics, and social interaction. These systems are becoming more widespread and are used to create content across different formats. However, as these technologies become more prevalent and integrated into various applications, concerns arise regarding public safety. Consequently, evaluating the potential risks posed by generative AI systems is becoming a priority for AI developers, policymakers, regulators, and civil society.

To address this issue, the researchers introduce 3D-GPT, a framework that utilizes large language models (LLMs) for instruction-driven 3D modeling. The framework positions LLMs as proficient problem solvers that can break down the procedural 3D modeling tasks into accessible segments and appoint the apt agent for each task.

The 3D-GPT framework integrates three core agents: the task dispatch agent, the conceptualization agent, and the modeling agent. They work together to achieve two main objectives. First, they enhance initial scene descriptions by evolving them into detailed forms while dynamically adapting the text based on subsequent instructions. Second, they integrate procedural generation by extracting parameter values from enriched text to effortlessly interface with 3D software for asset creation.

The task dispatch agent plays a crucial role in identifying the required functions for each instructional input. For instance, when presented with an instruction such as “translate the scene into a winter setting”, it pinpoints functions like add_snow_layer() and update_trees(). This role is instrumental in facilitating efficient task coordination between the conceptualization and modeling agents. From a safety perspective, the task dispatch agent ensures that only appropriate and safe functions are selected for execution, thereby mitigating potential risks associated with the deployment of generative AI systems.

The conceptualization agent enriches the user-provided text description into detailed appearance descriptions. After the task dispatch agent selects the required functions, the framework sends the user input text and the corresponding function-specific information to the conceptualization agent and requests augmented text. In terms of safety, the conceptualization agent plays a vital role in ensuring that the enriched text descriptions accurately represent the user’s instructions, thereby preventing potential misinterpretations or misuse of the 3D modeling functions.

The modeling agent deduces the parameters for each selected function and generates Python code scripts to invoke Blender’s API. The generated Python code script interfaces with Blender’s API for 3D content creation and rendering. Regarding safety, the modeling agent ensures that the inferred parameters and the generated Python code scripts are safe and appropriate for the selected functions. This process helps to avoid potential safety issues that could arise from incorrect parameter values or inappropriate function calls.
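The hand-off between the three agents can be sketched as a small pipeline. In the toy version below, each agent is a plain Python function standing in for an LLM call. The function names add_snow_layer and update_trees follow the article's example, while add_clouds, the keyword matching, the default parameters, and every other name are invented for illustration. The output is only a string of call text, not real Blender API code.

```python
# Hypothetical function registry. Keywords and default parameter values are
# invented for this sketch; a real system would hold documentation per function.
FUNCTIONS = {
    "add_snow_layer": {"keywords": ("winter", "snow"), "defaults": {"thickness": 0.2}},
    "update_trees": {"keywords": ("winter", "tree"), "defaults": {"leaf_density": 0.1}},
    "add_clouds": {"keywords": ("cloud", "storm"), "defaults": {"coverage": 0.5}},
}

def task_dispatch(instruction):
    """Stand-in for the task dispatch agent: pick the functions an instruction needs."""
    text = instruction.lower()
    return [name for name, spec in FUNCTIONS.items()
            if any(keyword in text for keyword in spec["keywords"])]

def conceptualize(instruction, function_name):
    """Stand-in for the conceptualization agent: enrich the text for one function."""
    return f"{instruction.strip()} [appearance details relevant to {function_name}]"

def model(function_name, enriched_text):
    """Stand-in for the modeling agent: emit call text for one function.

    A real agent would infer parameter values from enriched_text; this sketch
    ignores it and falls back to the registry defaults.
    """
    params = FUNCTIONS[function_name]["defaults"]
    args = ", ".join(f"{key}={value!r}" for key, value in params.items())
    return f"{function_name}({args})"

def run_pipeline(instruction):
    scripts = []
    for name in task_dispatch(instruction):
        enriched = conceptualize(instruction, name)
        scripts.append(model(name, enriched))
    return scripts

if __name__ == "__main__":
    for line in run_pipeline("Translate the scene into a winter setting"):
        print(line)
    # prints add_snow_layer(thickness=0.2) and update_trees(leaf_density=0.1)
```

Keeping the agents as separate steps means each one can be inspected or swapped on its own, which is also what makes the ablation study described in the article possible.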

The researchers conducted several experiments to showcase the proficiency of 3D-GPT in consistently generating results that align with user instructions. They also conducted an ablation study to systematically examine the contributions of each agent within their multi-agent system.

Despite its promising results, the framework has several limitations. These include limited curve control and shading design, dependence on procedural generation algorithms, and challenges in processing multi-modal instructions. Future research directions include LLM 3D fine-tuning, autonomous rule discovery, and multi-modal instruction processing.

In summary, the research paper introduces a novel framework that holds promise in enhancing human-AI communication in the context of 3D design and delivering high-quality results.


Transcendent questions on the future of AI: New starting points for breaking the logjam of AI tribal thinking

Going nowhere fast

Imagine you’re listening to someone you don’t know very well. Perhaps you’ve never even met in real life. You’re just passing acquaintances on a social networking site. A friend of a friend, say. Let’s call that person FoF.

FoF is making an unusual argument. You’ve not thought much about it before. To you, it seems a bit subversive.

You pause. You click on FoF’s profile, and look at other things he has said. Wow, one of his other statements marks him out as an apparent supporter of Cause Z. (That’s a cause I’ve made up for the sake of this fictitious dialog.)

You shudder. People who support Cause Z have got their priorities all wrong. They’re committed to an outdated ideology. Or they fail to understand free market dynamics. Or they’re ignorant of the Sapir-Whorf hypothesis. Whatever. There’s no need for you to listen to them.

Indeed, since FoF is a supporter of Cause Z, you’re tempted to block him. Why let his subversive ill-informed ideas clutter up your tidy filter bubble?

But today, you’re feeling magnanimous. You decide to break into the conversation, with your own explanation of why Cause Z is mistaken.

In turn, FoF finds your remarks unusual. First, it’s nothing to do with what he had just been saying. Second, it’s not a line of discussion he has heard before. To him, it seems a bit subversive.

FoF pauses. He clicks on your social media profile, and looks at other things you’ve said. Wow. One of your other statements marks you out as an apparent supporter of Cause Y.

FoF shudders. People who support Cause Y have got their priorities all wrong.

FoF feels magnanimous too. He breaks into your conversation, with his explanation as to why Cause Y is bunk.

By now, you’re exasperated. FoF has completely missed the point you were making. This time you really are going to block him. Goodbye.

The result: nothing learned at all.

And two people have had their emotions stirred up in unproductive ways. Goodness knows when and where each might vent their furies.

Trying again

We’ve all been the characters in this story on occasion. We’ve all missed opportunities to learn, and, in the process, we’ve had our emotions stirred up for no good reason.

Let’s consider how things could have gone better.

The first step forward is a commitment to resist prejudice. Maybe FoF really is a supporter of Cause Z. But that shouldn’t prejudge the value of anything else he also happens to say. Maybe you really are a supporter of Cause Y. But that doesn’t mean FoF should jump to conclusions about other opinions you offer.

Ideally, ideas should be separated from the broader philosophies in which they might be located. Ideas should be assessed on their own merits, without regard to who first advanced them – and regardless of who else supports them.

In other words, activists must be ready to set aside some of their haste and self-confidence, and instead adopt, at least for a while, the methods of the academy rather than the methods of activism.

That’s because, frankly, the challenges we’re facing as a global civilization are so complex as to defy being fully described by any one of our worldviews.

Cause Z may indeed have useful insights – but also some nasty blindspots. Likewise for Cause Y, and all the other causes and worldviews that gather supporters from time to time. None of them have all the answers.

On a good day, FoF appreciates that point. So do you. Both of you are willing, in principle, to supplement your own activism with a willingness to assess new ideas.

That’s in principle. The practice is often different.

That’s not just because we are tribal beings – having inherited tribal instincts from our prehistoric evolutionary ancestors.

It’s also because the ideas that are put forward as starting points for meaningful open discussions all too often fail in that purpose. They’re intended to help us set aside, for a while, our usual worldviews. But all too often, they have just a thin separation from well-known ideological positions.

These ideas aren’t sufficiently interesting in their own right. They’re too obviously a proxy for an underlying cause.

That’s why real effort needs to be put into designing what can be called transcendent questions.

These questions are potential starting points for meaningful non-tribal open discussions. These questions have the ability to trigger a suspension of ideology.

But without good transcendent questions, the conversation will quickly cascade back down to its previous state of logjam. That’s despite the good intentions people tried to keep in mind. And we’ll be blocking each other – if not literally, then mentally.

The AI conversation logjam

Within discussions of the future of AI, some tribal positions are well known:

One tribal group is defined by the opinion that so-called AI systems are not ‘true’ intelligence. In this view, these AI systems are just narrow tools, mindless number crunchers, statistical extrapolations, or stochastic parrots. People in this group delight in pointing out instances where AI systems make grotesque errors.

A second tribal group is overwhelmed with a sense of dread. In this view, AI is on the point of running beyond control. Indeed, Big Tech is on the point of running beyond control. Open-source mavericks are on the point of running beyond control. And there’s little that can be done about any of this.

A third group is focused on the remarkable benefits that advanced AI systems can deliver. Not only can such AI systems solve problems of climate change, poverty and malnutrition, cancer and dementia, and even aging. Crucially, they can also solve any problems that earlier, weaker generations of AI might be on the point of causing. In this view, it’s important to accelerate as fast as possible into that new world.

Crudely, these are the skeptics, the doomers, and the accelerationists. Sadly, they often have dim opinions of each other. When they identify a conversation partner as being a member of an opposed tribe, they shudder.

Can we find some transcendent questions, which will allow people with sympathies for these various groups to overcome, for a while, their tribal loyalties, in search of a better understanding? Which questions might unblock the AI safety conversation logjam?

A different starting point

In this context, I want to applaud Rob Bensinger. Rob is the communications lead at an organization called MIRI (the Machine Intelligence Research Institute).

(Just in case you’re tempted to strop away now, muttering unkind thoughts about MIRI, let me remind you of the commitment you made, a few paragraphs back, not to prejudge an idea just because the person raising it has some associations you disdain.)

(You did make that commitment, didn’t you?)

Rob has noticed the same kind of logjam and tribalism that I’ve just been talking about. As he puts it in a recent article:

Recent discussions of AI x-risk in places like Twitter tend to focus on “are you in the Rightthink Tribe, or the Wrongthink Tribe?” Are you a doomer? An accelerationist? An EA? A techno-optimist?

I’m pretty sure these discussions would go way better if the discussion looked less like that. More concrete claims, details, and probabilities; fewer vague slogans and vague expressions of certainty.

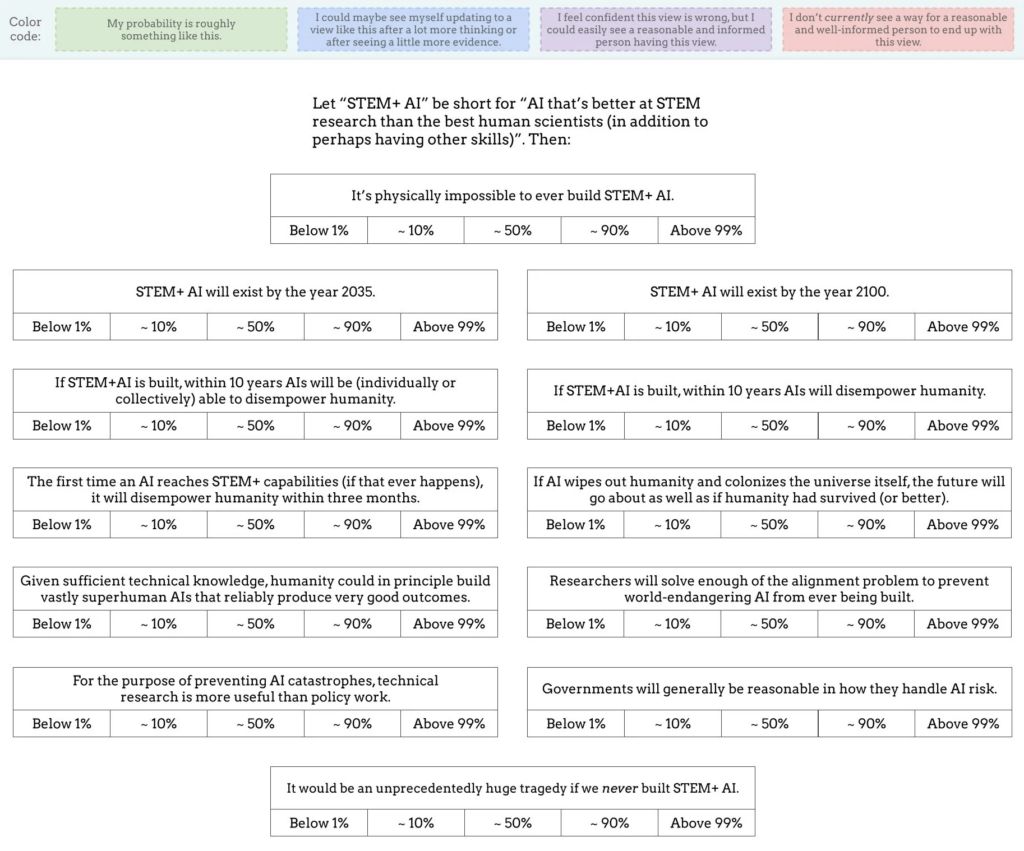

Following that introduction, Rob introduces his own set of twelve questions, as shown in the following picture:

For each of the twelve questions, readers are invited, not just to give a forthright ‘yes’ or ‘no’ answer, but to think probabilistically. They’re also invited to consider which range of probabilities other well-informed people with good reasoning abilities might plausibly assign to each answer.

It’s where Rob’s questions start that I find most interesting.

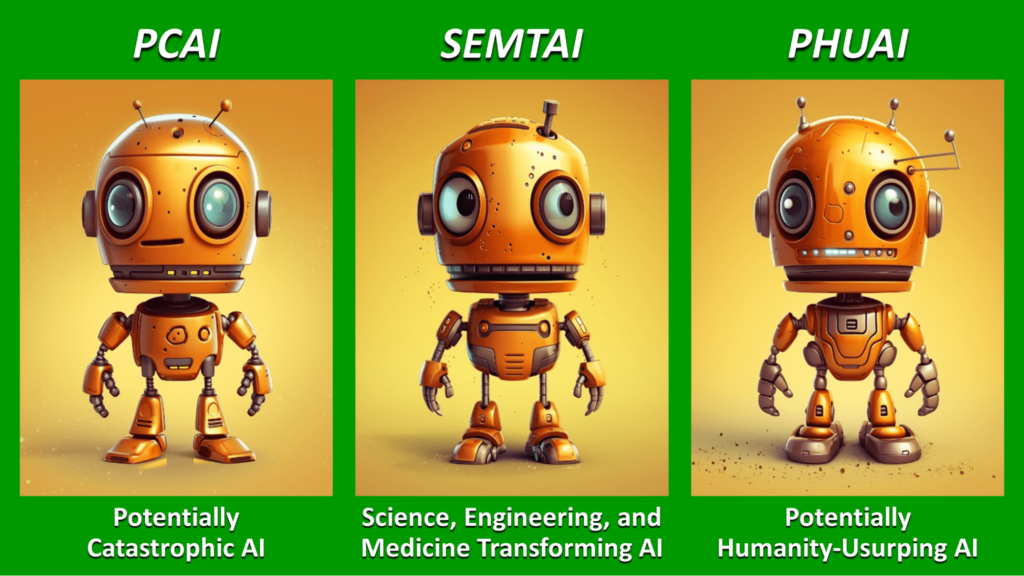

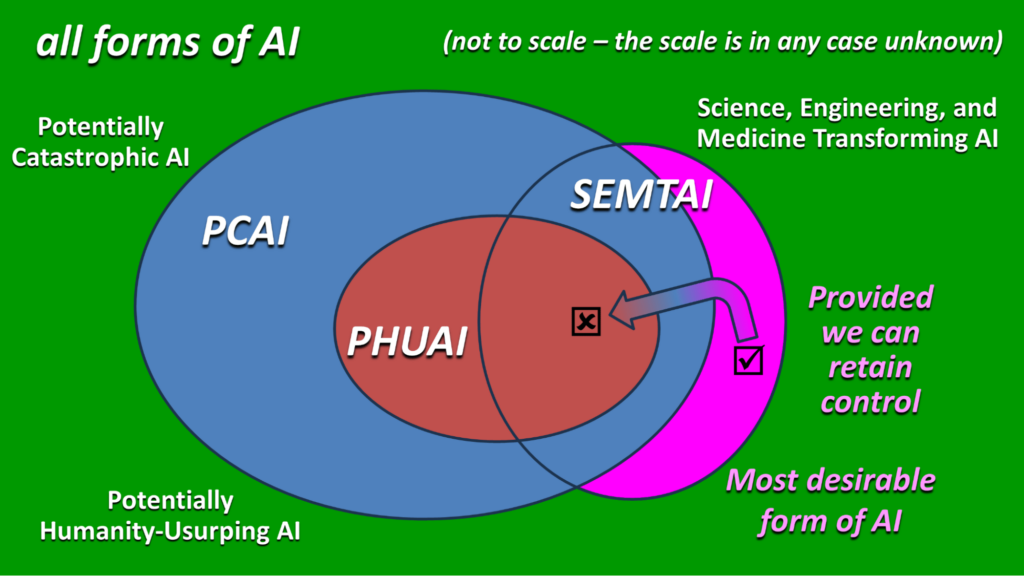

PCAI, SEMTAI, and PHUAI

All too often, discussions about the safety of future AI systems fail at the first hurdle. As soon as the phrase ‘AGI’ is mentioned, unhelpful philosophical debates break out. That’s why I have been suggesting new terms, such as PCAI, SEMTAI, and PHUAI.

I’ve suggested the pronunciations ‘pea sigh’, ‘sem tie’, and ‘foo eye’ – so that they all rhyme with each other and, also, with ‘AGI’. The three acronyms stand for:

- Potentially Catastrophic AI

- Science, Engineering, and Medicine Transforming AI

- Potentially Humanity-Usurping AI.

These concepts lead the conversation fairly quickly to three pairs of potentially transcendent questions:

- “When is PCAI likely to be created?” and “How could we stop these potentially catastrophic AI systems from being actually catastrophic?”

- “When is SEMTAI likely to be created?” and “How can we accelerate the advent of SEMTAI without also accelerating the advent of dangerous versions of PCAI or PHUAI?”

- “When is PHUAI likely to be created?” and “How could we stop such an AI from actually usurping humanity into a very unhappy state?”

The future most of us can agree as being profoundly desirable, surely, is one in which SEMTAI exists and is working wonders, uplifting the disciplines of science, engineering, and medicine.

If we can gain these benefits without the AI systems being “fully general” or “all-round superintelligent” or “independently autonomous, with desires and goals of its own”, I would personally see that as an advantage.

But regardless of whether SEMTAI actually meets the criteria various people have included in their own definitions of AGI, what path gives humanity SEMTAI without also giving us PCAI or even PHUAI? This is the key challenge.

Introducing ‘STEM+ AI’

Well, I confess that Rob Bensinger didn’t start his list of potentially transcendent questions with the concept of SEMTAI.

However, the term he did introduce was, as it happens, a slight rearrangement of the same letters: ‘STEM+ AI’. And the definition is pretty similar too:

Let ‘STEM+ AI’ be short for “AI that’s better at STEM research than the best human scientists (in addition to perhaps having other skills)”.

That leads to the first three questions on Rob’s list:

- What’s the probability that it’s physically impossible to ever build STEM+ AI?

- What’s the probability that STEM+ AI will exist by the year 2035?

- What’s the probability that STEM+ AI will exist by the year 2100?

At this point, you should probably pause, and determine your own answers. You don’t need to be precise. Just choose one of the following probability ranges:

- Below 1%

- Around 10%

- Around 50%

- Around 90%

- Above 99%

I won’t tell you my answers. Nor Rob’s, though you can find them online easily enough from links in his main article. It’s better if you reach your own answers first.

And recall the wider idea: don’t just decide your own answers. Also consider which probability ranges someone else might assign, assuming they are well-informed and competent in reasoning.

Then when you compare your answers with those of a colleague, friend, or online acquaintance, and discover surprising differences, the next step, of course, is to explore why each of you has reached your conclusions.

The probability of disempowering humanity

The next question that causes conversations about AI safety to stumble: what scales of risks should we look at? Should we focus our concern on so-called ‘existential risk’? What about ‘catastrophic risk’?

Rob seeks to transcend that logjam too. He raises questions about the probability that a STEM+ AI will disempower humanity. Here are questions 4 to 6 on his list:

- What’s the probability that, if STEM+AI is built, then AIs will be (individually or collectively) able, within ten years, to disempower humanity?

- What’s the probability that, if STEM+AI is built, then AIs will disempower humanity within ten years?

- What’s the probability that, if STEM+AI is built, then AIs will disempower humanity within three months?

Question 4 is about capability: given STEM+ AI abilities, will AI systems be capable, as a consequence, of disempowering humanity?

Questions 5 and 6 move from capability to proclivity. Will these AI systems actually exercise these abilities they have acquired? And if so, potentially how quickly?

Separating the ability and proclivity questions is an inspired idea. Again, I invite you to consider your answers.
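One practical consequence of separating ability from proclivity is that your three answers constrain one another. AIs that actually disempower humanity within ten years must have been able to do so within ten years, and disempowerment within three months is also disempowerment within ten years. The sketch below checks a set of answers for that basic coherence. The function and argument names are hypothetical, and the ordering is ordinary probability logic rather than anything stated in Rob's article.

```python
def check_coherence(p_able_10y, p_disempower_10y, p_disempower_3m):
    """Return a list of problems with answers to questions 4, 5 and 6.

    All three are probabilities conditional on STEM+ AI being built.
    An empty list means the answers are mutually consistent.
    """
    problems = []
    answers = {
        "Q4 (able within ten years)": p_able_10y,
        "Q5 (disempowers within ten years)": p_disempower_10y,
        "Q6 (disempowers within three months)": p_disempower_3m,
    }
    for label, p in answers.items():
        if not 0.0 <= p <= 1.0:
            problems.append(f"{label} is not a probability: {p}")
    # Doing something implies being able to do it, so Q5 cannot exceed Q4.
    if p_disempower_10y > p_able_10y:
        problems.append("Q5 exceeds Q4: doing implies being able")
    # Three months falls inside ten years, so Q6 cannot exceed Q5.
    if p_disempower_3m > p_disempower_10y:
        problems.append("Q6 exceeds Q5: three months is within ten years")
    return problems

if __name__ == "__main__":
    print(check_coherence(0.5, 0.1, 0.01))  # prints []
    print(check_coherence(0.1, 0.5, 0.01))  # reports that Q5 exceeds Q4
```

The gap between your answers to questions 4 and 5 is then a rough measure of how much weight you put on proclivity: a large gap says the AIs could, but probably won't.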

Two moral evaluations

Question 7 introduces another angle, namely that of moral evaluation:

- 7. What’s the probability that, if AI wipes out humanity and colonizes the universe itself, the future will go about as well as if humanity had survived (or better)?

The last question in the set – question 12 – also asks for a moral evaluation:

- 12. How strongly do you agree with the statement that it would be an unprecedentedly huge tragedy if we never built STEM+ AI?

Yet again, these questions have the ability to inspire fruitful conversation and provoke new insights.

Better technology or better governance?

You may have noticed I skipped numbers 8-11. These four questions may be the most important on the entire list. They address questions of technological possibility and governance possibility. Here’s question 11:

- 11. What’s the probability that governments will generally be reasonable in how they handle AI risk?

And here’s question 10:

- 10. What’s the probability that, for the purpose of preventing AI catastrophes, technical research is more useful than policy work?

As for questions 8 and 9, well, I’ll leave you to discover these by yourself. And I encourage you to become involved in the online conversation that these questions have catalyzed.

Finally, if you think you have a better transcendent question to drop into the conversation, please let me know!


The Human Flaw: The Power Struggle to Control God

In the last few weeks, we’ve been treated to a wonderful display of backbiting, intrigue, sentimental hogwash, and brazen power grabbing as the leadership of OpenAI embarked upon a delicious bout of internecine warfare. Altman and Brockman were out, then in again, and three different CEOs stepped up. Cryptic messages, dark secrets, panicked whispers of a runaway AGI, and Microsoft looming over the show, threatening to buy the whole auditorium and kick out the audience.

An Uneasy Alliance

A solution, it seems, has been found: an entente cordiale between the power players, with Altman restored as CEO and Microsoft taking an ‘observer’ seat on the board. Ilya, after his Iago-like poisoning of the board against Altman, was left posting heart emojis on Twitter and celebrating the return of the man he tried to stab in the front.

Overall, OpenAI’s staff, at least publicly, looked like college students playing at realpolitik before realising these games have consequences, and that powerful forces like Microsoft could scrub OpenAI from the songsheets of the future. And you couldn’t help but wonder at the vaguely cultish, student dorm vibes of it all, and begin to interrogate who exactly is in command of gestating what some say could be the most powerful and society-changing technology ever created.

Drama Sells

There is so much wrong with this whole sordid affair, it’s hard to know where to begin. Mostly, it’s the depressing despair at just how two-faced literally everyone in the corporate world is – publicly and without shame. The complete lack of professionalism displayed on a grand scale at one of the world’s most important companies is cause for alarm. Altman’s ousting was visibly a blatant grab for power, and Altman was quite willing to sell the whole project down the river and gift the vicious zaibatsu that is Microsoft the keys to the kingdom so that he could remain in charge – and continue to prance about as the figurehead of the AI revolution. It was galling.

It’s sad, it’s irresponsible, and worst of all everyone involved is arguing from a position of ‘principle’ that, to this scathing observer, is utterly asinine and false. Make no mistake: this is about money, and this is about power, and – curiously – this is about advertising. The boat-rocking hasn’t tipped the crew overboard, but it’s certainly made some waves. ‘Dangerous new model’, ‘scary powerful’ – is what they say about Q*. Well, you sell the sizzle, not the steak, don’t you? It reestablishes OpenAI at the front of the minds of everyone interested in AI just as the next wave of GPT-products come online.

Speed Up or Slow Down

Of course, I might be wrong. And, even if I’m not, there is a clear schism developing in OpenAI, and across the sector as a whole, about the balance between commercial gain and building AI in a manner ‘that benefits all humanity’ (as OpenAI’s mission statement reads; ‘Don’t be evil’, etc., as Google used to say).

There are the accelerationists, who believe AI will rebuild society and we should strain every sinew to advance and deploy it in everyday life. There are the decelerationists, who believe the risks associated with unbridled AI threaten to not so much rebuild society, as tear it apart. Altman wants to go faster, Ilya wants to slow down. Altman wants to sell, sell, sell. Ilya wants to build the right way.

For what it’s worth, the decelerationists are wrong. We humans are a pack of Pandoras, and we will open the box. If you don’t do it, someone else will. Capitalism allows for nothing else. If you want to decelerate, fine, resign and go live out what’s left of your normal days on an island. You can’t stop this, Ilya. I applaud principled stances – it’s just a shame that stance was achieved through an attempted coup, and abandoned as soon as they realised it wasn’t going to work.

But for the Altmans out there, or the Altman fans: don’t be too quick to think this victory for accelerationism, for commercialism, and for Microsoft (the real winner) is some holy mission to advance AI. It’s only a matter of time before AI-bots are flogged to torment our grandmothers on social media with targeted ads.

Creation Protocols

Fully-aligned AGI is a powerful dream. A benevolent, technipotent, tireless agent that works to administrate, advance, and protect humanity. The question of alignment is essential, especially when it comes to manufacturing intelligences that have any type of independent ‘will’ that can act beyond their given purpose. Just as essential, though, is the problematic use of ‘dumb’ LLMs to proliferate marketing messaging, sales calls, social interactions – and how their continued use could destabilise the fabric of society. OpenAI and other AI manufacturers must contend with both questions. But it’s easy to guess which they will go for when answering to their shareholders.

There is a human flaw. We are building new gods, but they can’t escape the stains of our morality. We humans can’t help but fight over hierarchy and power. We can’t help but want more and more. It would be a cosmic joke if, just as we approach a singularity which could help us transcend as a species, our ape-like tensions and fireside bickering bring it all crashing down. Worse, we may end up creating a promethean monster, aided and abetted by corporations who don’t care what they do. It will be up to us to forgive them.
